Model Explainability: Unveiling the Black Box | Vibepedia

Contents

- 🔍 Introduction to Model Explainability

- 📊 The Importance of Model Explainability

- 🔒 Techniques for Model Explainability

- 📈 Model Explainability Metrics

- 🚫 Challenges in Model Explainability

- 🌈 Applications of Model Explainability

- 🤝 Model Explainability and Transparency

- 📊 Model Explainability Tools and Techniques

- 📚 Model Explainability in Deep Learning

- 📊 Model Explainability in Real-World Scenarios

- 🔮 Future of Model Explainability

- 📝 Conclusion

- Frequently Asked Questions

- Related Topics

Overview

Model explainability refers to the ability to understand and interpret the decisions made by machine learning models. As AI spreads into high-stakes domains such as healthcare, finance, and law, demand for explainability has grown sharply. Researchers such as Cynthia Rudin and Adrian Weller have shaped the debate over interpretability and transparency; Rudin in particular argues for inherently interpretable models over post-hoc explanations of black-box models. The field has its challenges and controversies, with some arguing that explainability may compromise model performance or create unrealistic expectations. The European Union's General Data Protection Regulation (GDPR) is often cited as establishing a "right to explanation" for automated decisions, and although the scope of that right is debated, it has made explainable AI a pressing concern. As the field evolves, advances are likely to find applications in model debugging, fairness, and accountability. Key players such as Google, Facebook, and the Allen Institute for Artificial Intelligence are expected to influence the direction of explainable AI. Vibepedia gives the topic a vibe score of 80, indicating a high level of cultural energy and relevance.

🔍 Introduction to Model Explainability

Model explainability is the part of artificial intelligence (AI) concerned with understanding how machine learning models arrive at their predictions or decisions. As models grow more complex, their internal reasoning becomes harder to inspect, which is why they are often described as black boxes. Explainability research develops techniques to explain and interpret those predictions, which helps build trust in AI systems and makes it possible to check whether they are fair, transparent, and accountable. For instance, LIME (Local Interpretable Model-agnostic Explanations), introduced in 2016 by Marco Tulio Ribeiro, Sameer Singh, and Carlos Guestrin, explains an individual prediction by fitting a simple, interpretable model to the black-box model's behavior around that input.

📊 The Importance of Model Explainability

Explainability matters most in high-stakes applications such as healthcare, finance, and law, where a model's predictions can have significant consequences for individuals. Seeing which inputs drive a prediction helps to surface biases, such as a model relying on a proxy for a protected attribute, and helps developers find and fix errors, which leads to more accurate and reliable models for business and government use. For example, IBM maintains the open-source AI Explainability 360 toolkit, and SHAP (SHapley Additive exPlanations), introduced by Scott Lundberg and Su-In Lee in 2017, attributes a prediction to its input features using Shapley values from cooperative game theory.

🔒 Techniques for Model Explainability

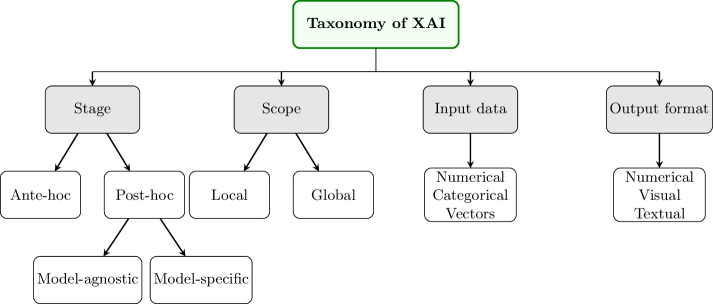

There are several techniques for model explainability, including LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations); TreeExplainer is the SHAP library's explainer specialized for tree-based models. These techniques explain a prediction by assigning an importance score to each input feature. Techniques fall into two broad types: model-specific and model-agnostic. Model-specific techniques exploit the structure of a particular type of model, such as decision trees or neural networks, while model-agnostic techniques treat the model as a black box and can be applied to any model. On the tooling side, TensorFlow users can inspect models with TensorBoard, a general visualization dashboard, and with the third-party tf-explain library, while PyTorch users can use Captum, an interpretability library for PyTorch.
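To make "assigning importance scores to input features" concrete, here is a minimal sketch of exact Shapley-value attribution, the quantity that SHAP approximates. It is not the SHAP library's implementation: `shapley_values`, `toy_model`, and the all-zeros baseline are hypothetical names and choices made for illustration, and "removing" a feature is assumed to mean replacing it with its baseline value.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attributions for a single prediction.

    Features outside a coalition are replaced by their baseline value.
    The number of coalitions grows as 2**n, so this is only practical
    for a handful of features.
    """
    n = len(instance)

    def value(coalition):
        # Model output when only the features in `coalition` are "present".
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical toy model: two linear terms plus an interaction between x0 and x2.
def toy_model(x):
    return 2.0 * x[0] + 1.0 * x[1] + x[0] * x[2]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]  # assumed reference point
phi = shapley_values(toy_model, instance, baseline)
print(phi)
```

The attributions sum to the difference between the prediction for the instance and the prediction for the baseline, and the interaction term is split evenly between the two features involved. Because the function only calls `predict`, it is model-agnostic in the sense described above.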

📈 Model Explainability Metrics

Explainability metrics evaluate the quality of the explanations a technique produces, not the predictive performance of the underlying model. Accuracy, precision, recall, F1-score, and ROC-AUC (area under the receiver operating characteristic curve) measure how well a model predicts; they do not measure how good an explanation is, although they can be used to score how closely a surrogate model reproduces the original model's outputs. Explainability metrics can be quantitative or qualitative. Quantitative metrics produce numerical scores: fidelity (also called faithfulness) measures how well an explanation reflects the model's actual behavior, and stability measures how much explanations change between similar inputs. Qualitative evaluation relies on human judgment, for example user studies of whether an explanation helps people understand or predict what the model does. There is no single, widely accepted standard metric.
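One way a quantitative score for an explanation could be computed is a deletion test: remove features in order of claimed importance and watch how quickly the prediction falls. The sketch below is illustrative only; `deletion_curve`, `toy_model`, and both sets of attributions are hypothetical, and "removing" a feature is assumed to mean resetting it to a baseline value.

```python
def deletion_curve(predict, instance, baseline, attributions):
    """Predictions recorded while features are reset to their baseline,
    starting with the feature that has the largest absolute attribution."""
    order = sorted(range(len(instance)), key=lambda i: -abs(attributions[i]))
    x = list(instance)
    curve = [predict(x)]
    for i in order:
        x[i] = baseline[i]
        curve.append(predict(x))
    return curve


# Hypothetical model in which feature 0 dominates the output.
def toy_model(x):
    return 3.0 * x[0] + 0.5 * x[1] + 0.1 * x[2]


instance = [1.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]  # assumed reference point

faithful = [3.0, 0.5, 0.1]    # ranks features the way the model uses them
unfaithful = [0.1, 0.5, 3.0]  # ranks them in the opposite order

faithful_curve = deletion_curve(toy_model, instance, baseline, faithful)
unfaithful_curve = deletion_curve(toy_model, instance, baseline, unfaithful)

# A smaller area under the deletion curve suggests a more faithful ranking.
faithful_area = sum(faithful_curve) / len(faithful_curve)
unfaithful_area = sum(unfaithful_curve) / len(unfaithful_curve)
print(faithful_area, unfaithful_area)
```

For a positive prediction like this one, the faithful ranking makes the output collapse after the first deletion, so its curve has the smaller area. The score depends on the choice of baseline, which is one reason such metrics are hard to standardize.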

🚫 Challenges in Model Explainability

Despite its importance, model explainability faces several challenges. One is the lack of standardization: different techniques can produce different explanations for the same prediction, which makes them hard to compare and evaluate. Another is the complexity of modern machine learning models, which makes it hard to summarize their behavior faithfully. Explanation methods can also be computationally expensive, which limits their use in real-time systems; exact Shapley values, for example, require evaluating the model on every subset of features. Open-source libraries such as Captum (developed at Facebook) and managed services such as Amazon SageMaker Clarify package common methods, but they do not remove these underlying difficulties.
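The computational cost can be illustrated with Shapley-value attribution, where an exact answer needs a model evaluation for every subset of features (2**n of them). A common workaround is to average marginal contributions over randomly sampled feature orderings. The following is a rough sketch under stated assumptions, not any library's implementation: `sampled_shapley`, `toy_model`, the all-zeros baseline, and the sample count are all hypothetical choices.

```python
import random


def sampled_shapley(predict, instance, baseline, n_samples=2000, seed=0):
    """Monte Carlo estimate of Shapley attributions.

    Each sample draws a random feature ordering and adds features one at a
    time, crediting each feature with the change in prediction it causes.
    Cost is n_samples * (n + 1) model calls instead of 2**n.
    """
    rng = random.Random(seed)
    n = len(instance)
    totals = [0.0] * n
    for _ in range(n_samples):
        order = list(range(n))
        rng.shuffle(order)
        x = list(baseline)
        previous = predict(x)
        for i in order:
            x[i] = instance[i]
            current = predict(x)
            totals[i] += current - previous
            previous = current
    return [t / n_samples for t in totals]


# Hypothetical toy model with an interaction between x0 and x2.
def toy_model(x):
    return 2.0 * x[0] + 1.0 * x[1] + x[0] * x[2]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]  # assumed reference point
estimate = sampled_shapley(toy_model, instance, baseline)
print(estimate)
```

The estimate trades accuracy for speed: more samples reduce the noise but add model calls, which is the tension that limits these methods in real-time systems. For this toy model the exact attributions are 3.5, 2.0, and 1.5, and the sampled values should land close to them.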

🌈 Applications of Model Explainability

Model explainability has applications in many real-world settings. In healthcare, it can help identify biases in models used for diagnosis and treatment. In finance, it can do the same for models used for credit scoring and risk assessment. In law, it can help scrutinize models used for predictive policing and sentencing. General-purpose tools such as IBM's AI Explainability 360 and SHAP (SHapley Additive exPlanations) can be applied in each of these domains.

🤝 Model Explainability and Transparency

Model explainability and transparency are closely related. Explainability provides insight into how a model reaches its decisions, while transparency concerns openness about the data and algorithms used to build and train it. Both are needed to build trust in AI systems and to check that they are fair, accountable, and reliable. For instance, LIME (Local Interpretable Model-agnostic Explanations) explains individual predictions of an otherwise opaque model, and open-source libraries such as Captum expose attribution methods that anyone can inspect and run.

📊 Model Explainability Tools and Techniques

Several tools are available for model explainability. TensorFlow and PyTorch are general deep learning frameworks and not explainability tools in themselves, but each has companion tooling: TensorBoard and the third-party tf-explain library for TensorFlow, and Captum for PyTorch. Explanation methods can also be applied to models built with libraries like scikit-learn and Keras. For example, the SHAP (SHapley Additive exPlanations) library works with scikit-learn tree ensembles and Keras networks, and IBM's AI Explainability 360 toolkit collects a range of explanation algorithms in one package.

📚 Model Explainability in Deep Learning

Explainability is especially challenging for deep learning models. Their many layers and parameters make them difficult to interpret directly. Explanation techniques address this by highlighting the input features and patterns that matter most for a prediction. Model-agnostic methods such as LIME (Local Interpretable Model-agnostic Explanations) can be applied to deep networks, and gradient-based attribution methods such as saliency maps and Integrated Gradients, which was introduced by researchers at Google, use the network's gradients directly. Captum implements many of these methods for PyTorch models.
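A simple way to see which input features matter to a neural network is a saliency score: the sensitivity of the output to a small change in each input. The sketch below uses a tiny hand-written network with made-up weights and approximates the gradient by central finite differences. Real deep learning frameworks compute the same quantity with backpropagation; `tiny_net`, its weights, and `saliency` are hypothetical and exist only for illustration.

```python
import math

# Hypothetical fixed weights for a network with 3 inputs and 2 hidden units.
# The third input has zero weight everywhere, so it cannot affect the output.
W1 = [[1.5, -0.5, 0.0],
      [0.0, 0.8, 0.0]]
B1 = [0.0, 0.1]
W2 = [1.0, -1.0]
B2 = 0.0


def tiny_net(x):
    """Forward pass: tanh hidden layer followed by a linear output."""
    hidden = [
        math.tanh(sum(w * v for w, v in zip(row, x)) + b)
        for row, b in zip(W1, B1)
    ]
    return sum(w * h for w, h in zip(W2, hidden)) + B2


def saliency(predict, x, eps=1e-5):
    """Approximate d(output)/d(input_i) with central finite differences."""
    grads = []
    for i in range(len(x)):
        up = list(x)
        up[i] += eps
        down = list(x)
        down[i] -= eps
        grads.append((predict(up) - predict(down)) / (2 * eps))
    return grads


x = [0.2, -0.4, 0.9]
grads = saliency(tiny_net, x)
print(grads)
```

The third input receives a saliency of zero because the network ignores it, while the first two receive nonzero scores. Finite differences need two forward passes per feature, which is why gradient-based methods that use a single backward pass are preferred for large networks.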

📊 Model Explainability in Real-World Scenarios

In deployed systems, explainability supports the goals noted in the overview: model debugging, fairness, and accountability. In healthcare, explanations help reveal whether a model used for diagnosis and treatment relies on biased signals. In finance, they help reviewers see which factors drive credit scoring and risk assessment decisions. In law, they allow models used for predictive policing and sentencing to be scrutinized for bias. General-purpose tools such as IBM's AI Explainability 360 and SHAP (SHapley Additive exPlanations) can be used in each setting.

🔮 Future of Model Explainability

Model explainability is evolving rapidly. One trend is the development of new techniques that give more faithful insight into the decision-making processes of machine learning models. Another is growing adoption in real-world applications, including healthcare, finance, and law. Explainability is also becoming more important in business and government, where it can help build trust in AI systems and verify that they are fair, accountable, and reliable.

📝 Conclusion

In conclusion, model explainability is concerned with understanding how machine learning models make predictions or decisions. It is essential for building trust in AI systems and for checking that they are fair, transparent, and accountable. Available techniques and tools include LIME (Local Interpretable Model-agnostic Explanations), SHAP (SHapley Additive exPlanations), and libraries such as Captum and AI Explainability 360. Explainability has real-world applications in healthcare, finance, and law, and its importance will continue to grow as AI systems become more complex.

Key Facts

- Year: 2019

- Origin: Machine Learning Research Community

- Category: Artificial Intelligence

- Type: Concept

Frequently Asked Questions

What is model explainability?

Model explainability is the ability to understand how machine learning models make predictions or decisions. It provides insight into a model's decision-making, which helps build trust in AI systems and check that they are fair, transparent, and accountable, particularly in healthcare, finance, and law. LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) are two widely used techniques.

Why is model explainability important?

Model explainability is important because it helps build trust in AI systems and makes it possible to check that they are fair, transparent, and accountable. Insight into a model's decision-making is essential in high-stakes applications such as healthcare, finance, and law, and it helps to identify biases so they can be corrected. Tools that support this include IBM's open-source AI Explainability 360 toolkit and the SHAP (SHapley Additive exPlanations) library.

What are some techniques for model explainability?

Widely used techniques include LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations), whose TreeExplainer is specialized for tree-based models. These techniques assign an importance score to each input feature. Model-specific techniques exploit the structure of a particular type of model, while model-agnostic techniques can be applied to any model. For tooling, TensorFlow users can turn to TensorBoard and the third-party tf-explain library, and PyTorch users to Captum.

What are some applications of model explainability?

Model explainability has real-world applications in healthcare, finance, and law. In healthcare, it can help identify biases in models used for diagnosis and treatment. In finance, it can do the same for credit scoring and risk assessment models. In law, it can help scrutinize models used for predictive policing and sentencing. General-purpose tools such as IBM's AI Explainability 360 and SHAP (SHapley Additive exPlanations) can be applied in each of these areas.

What is the future of model explainability?

The field is evolving rapidly. New techniques continue to be developed, adoption is growing in healthcare, finance, and law, and explainability is becoming more important in business and government, where it can help build trust in AI systems and verify that they are fair, accountable, and reliable.